This is Part 1 of a three-part series on LLMOps.

Your MLOps Playbook Won't Work Here

I've seen a few teams try to shoehorn LLM deployments into their existing MLOps frameworks. It makes sense on paper: a lot of the concepts and requirements overlap. You've got models, you've got inference, you've got monitoring. Same problems, same solutions, right?

The first time you try to version a prompt template the same way you version model weights, you'll realize something's different. When your inference costs jump 10x because users started asking longer questions, you'll know this isn't traditional ML. And when someone asks you to explain why the model hallucinated a completely plausible-sounding but entirely fictional API endpoint, you'll understand that LLMOps is its own discipline.

What Makes LLMs Different

LLMs break most of the core concepts that traditional MLOps is built on.

You're Not Training Models, You're Adapting Them

In traditional ML, you start with data, train a model, and deploy it. The model object is yours. You built it. You understand its architecture because you chose it.

With LLMs, you're starting with a foundation model that someone else trained on hundreds of billions of tokens: GPT-5, Sonnet 4.5, LLaMA, Mistral, and so on. These models already exist. Your job isn't to train them; it's to adapt them through fine-tuning, prompt engineering, or retrieval augmentation to accomplish the task at hand.

This changes everything about your operational model. You're not managing training pipelines and hyperparameter sweeps. You're managing prompt templates, few-shot examples, retrieval contexts, and a small number of carefully crafted data examples for fine-tuning. The artifacts you version aren't just model weights; they're text files that control behavior.

Prompts Are Code Now

In traditional ML, your inputs are structured: feature vectors, images, time series data. You know the shape. You validate the schema. You can unit test the preprocessing.

With LLMs, your inputs are natural language prompts. And those prompts aren't just data; they're instructions. They're logic. They're the interface between your intent and the model's behavior.

Change one word in a prompt and you might get completely different outputs. Add a few-shot example and suddenly the model understands a pattern it missed before. Chain multiple prompts together and you've built a workflow that's part data pipeline, part control flow, part black magic.

This means prompts need to be versioned, tested, and deployed with the same rigor as code. But they're not code. They're text that influences probabilistic behavior. That makes testing harder: you often end up asserting on the actions the LLM takes (like a specific tool being called) rather than on the exact wording of its output.
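To make that concrete, here's a minimal sketch of versioning a prompt by its content and testing for an action rather than for wording. Everything in it is an assumption for illustration: `PromptVersion`, the hashing scheme, and `fake_model` (a stand-in for a real LLM call) are hypothetical, not any particular library's API.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    """A prompt template with a content-derived version id (hypothetical scheme)."""
    name: str
    template: str

    @property
    def version(self) -> str:
        # Any edit to the template text produces a new id, much like a commit hash.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]

    def render(self, **variables: str) -> str:
        return self.template.format(**variables)


def fake_model(prompt: str) -> dict:
    """Stand-in for a real LLM call; returns a structured action."""
    if "weather" in prompt.lower():
        return {"tool": "get_weather", "args": {"city": "Paris"}}
    return {"tool": None, "text": "I can't help with that."}


def test_weather_prompt_calls_tool() -> None:
    prompt = PromptVersion(
        name="assistant",
        template="You are a helpful assistant. User question: {question}",
    )
    response = fake_model(prompt.render(question="What's the weather in Paris?"))
    # Assert on the action taken, not on the exact wording of the output.
    assert response["tool"] == "get_weather"
```

Logging `prompt.version` next to every response is one way to tie a behavior change back to the exact template that produced it.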

Retrieval Is Part of the Model

Traditional ML models are self-contained. You give them inputs, they produce outputs. The model weights encode everything the model knows.

LLMs often need additional knowledge, especially when given a task that requires current or internal data. They can't know your company's internal documentation, last week's sales figures, or the latest state of your inventory. So you build retrieval pipelines that fetch relevant context and inject it into prompts. This is the Retrieval-Augmented Generation (RAG) architecture.

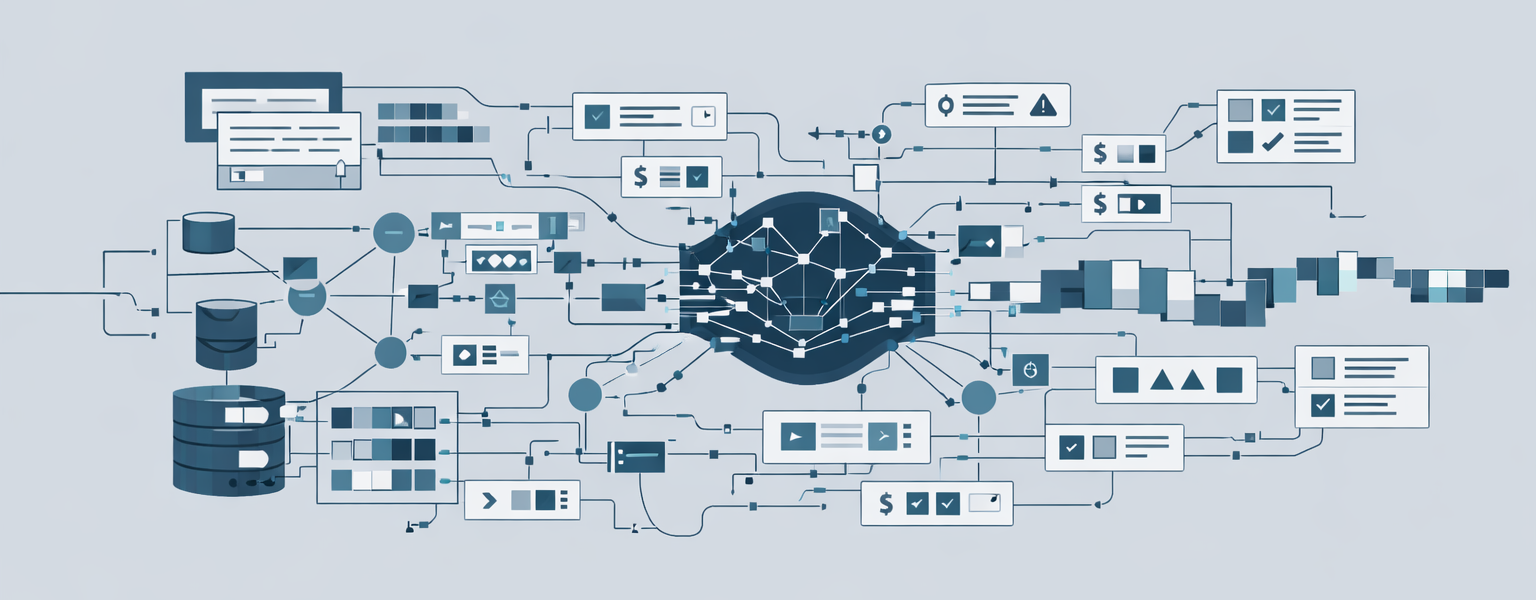

Now your "model" isn't just the LLM. It's the LLM plus the vector database plus the embedding model plus the retrieval logic plus the context injection strategy. When something goes wrong, the problem could be anywhere in that chain.

- Vector database storing embedded documents

- Embedding model converting text to vectors

- Similarity search retrieving relevant chunks

- Context injection adding retrieved text to prompts

- LLM generating responses based on augmented context

Each component needs monitoring, versioning, and maintenance. Your retrieval index goes stale? Your model's answers become outdated. Your embedding model changes? Your similarity search breaks. This is a distributed system, and the data integration pipelines feeding it are as much part of the model as the LLM itself.
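The chain above can be sketched end to end in a few lines. This is a toy under loud assumptions: `embed` is a bag-of-words counter standing in for a real embedding model, `INDEX` is a Python list standing in for a vector database, and the documents are made up. The point is only to show where each component sits.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Stand-in for a vector database: documents stored alongside their vectors.
DOCUMENTS = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Shipping to Europe takes 7 to 10 days.",
]
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]


def retrieve(query: str, k: int = 1) -> list[str]:
    """Similarity search: return the k chunks closest to the query."""
    query_vector = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query: str, k: int = 1) -> str:
    """Context injection: place the retrieved text ahead of the question."""
    context = "\n".join(retrieve(query, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Notice that swapping `embed` for a different function without rebuilding `INDEX` would silently compare vectors from two different spaces, which is exactly the "embedding model changes" failure mode.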

Memory Isn't Just Context

Traditional ML models are stateless. Each inference is independent. You give them inputs, they produce outputs, they forget everything.

LLMs need memory to maintain coherent conversations. When a user says "tell me more about that," the model needs to know what "that" refers to. When building a multi-turn application, you're managing both short-term memory (the current conversation) and long-term memory (user preferences, past interactions, learned patterns).

Short-term memory lives in the context window, but context windows have limits. GPT-5 handles 400K tokens, Claude 200K, but even these massive windows fill up quickly in long conversations. You need strategies for what to keep, what to summarize, and what to discard. Position matters too. This is the "lost in the middle" problem: LLMs attend better to information at the beginning and end of the context window, with middle content receiving less attention.
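One of the simplest "what to keep" strategies is a sliding window: always keep the system message, then keep as many of the most recent turns as fit in a token budget. Here's a rough sketch. The `count_tokens` helper is a crude word-count assumption (real tokenizers count differently), and the message format is just a convention I'm assuming for the example.

```python
def count_tokens(text: str) -> int:
    """Crude proxy: whitespace-separated words. Real tokenizers count differently."""
    return len(text.split())


def trim_history(messages: list[dict], budget: int) -> list[dict]:
    """Keep system messages plus as many of the most recent turns as fit."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]

    used = sum(count_tokens(m["content"]) for m in system)
    kept: list[dict] = []
    # Walk backwards from the newest turn and stop once the budget is exhausted.
    for message in reversed(turns):
        cost = count_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost

    return system + list(reversed(kept))
```

A sliding window discards old turns outright. Summarizing them before they fall off is the usual next step, at the price of an extra model call.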

Long-term memory requires external storage. You're maintaining conversation histories in databases, extracting key facts and preferences, and retrieving relevant memories when needed. This isn't just about storage; it's about memory consolidation, deciding what's important enough to persist, and preventing catastrophic forgetting where new information overwrites critical old information.

- Context window limits forcing selective memory retention

- Lost in the middle problem reducing attention to mid-context information

- Memory consolidation deciding what to keep versus discard

- Catastrophic forgetting when new information overwrites important memories

- Privacy and security concerns with storing user conversation data

- Retrieval strategies for finding relevant memories efficiently

Memory systems also introduce new operational concerns. You're managing memory namespaces to organize information by user or topic. You're implementing retention policies to comply with privacy regulations. You're tracking memory retrieval accuracy and monitoring for memory-induced hallucinations when the model references incorrect stored information.

Traditional ML doesn't deal with any of this. Your model makes predictions. It doesn't remember who asked or what they asked before. With LLMs, memory management becomes a first-class operational concern that affects quality, cost, and compliance.
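Here's a small sketch of what namespaces and retention policies can look like in practice. `MemoryStore` is a hypothetical in-memory class, not a real library; a production system would sit on a database, and timestamps are passed in explicitly here only to keep the example deterministic.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical long-term memory, organized by namespace (e.g. one per user)."""
    retention_seconds: float
    _items: dict[str, list[tuple[float, str]]] = field(default_factory=dict)

    def remember(self, namespace: str, fact: str, now: float) -> None:
        self._items.setdefault(namespace, []).append((now, fact))

    def purge_expired(self, now: float) -> None:
        # Retention policy: drop anything older than the configured window.
        cutoff = now - self.retention_seconds
        for namespace in list(self._items):
            self._items[namespace] = [
                (stored_at, fact)
                for stored_at, fact in self._items[namespace]
                if stored_at >= cutoff
            ]

    def recall(self, namespace: str, now: float) -> list[str]:
        self.purge_expired(now)
        return [fact for _, fact in self._items.get(namespace, [])]

    def forget_namespace(self, namespace: str) -> None:
        """Delete everything stored for one namespace, e.g. on a deletion request."""
        self._items.pop(namespace, None)
```

Keeping each user's memories in their own namespace is what makes both the privacy story (delete one user) and the retrieval story (search only what's relevant) tractable.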

Inference Costs Actually Matter

In traditional ML, inference is cheap. You run a forward pass through a model, get a prediction, move on. Even complex models typically cost fractions of a cent per inference.

LLMs are different. They're massive. Billions of parameters. And they don't just produce one output; they generate tokens sequentially. A single response might cost hundreds of times more than a traditional ML inference.

This means cost becomes a first-class operational concern. You need to track tokens per request, cost per user, latency per query. You need to optimize prompt length, implement caching, consider smaller models for simpler tasks. You need to set budgets and alerts because a poorly designed prompt could cost you thousands of dollars before you notice.
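A budget alert doesn't need to be elaborate to be useful. The sketch below tracks spend per request and flags when you cross a threshold. The prices are made-up placeholders (substitute your provider's actual rates), and `BudgetTracker` is a hypothetical helper, not part of any SDK.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; substitute your provider's rates.
PRICE_PER_1K_INPUT = 0.003
PRICE_PER_1K_OUTPUT = 0.015


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request, with input and output tokens priced separately."""
    return (
        input_tokens / 1000 * PRICE_PER_1K_INPUT
        + output_tokens / 1000 * PRICE_PER_1K_OUTPUT
    )


@dataclass
class BudgetTracker:
    daily_budget_usd: float
    alert_fraction: float = 0.8  # warn once 80% of the budget is spent
    spent_usd: float = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> str:
        """Add one request's cost and report the resulting budget status."""
        self.spent_usd += request_cost(input_tokens, output_tokens)
        if self.spent_usd >= self.daily_budget_usd:
            return "over_budget"
        if self.spent_usd >= self.alert_fraction * self.daily_budget_usd:
            return "alert"
        return "ok"
```

In a real deployment you'd keep one tracker per user or per feature, since a single aggregate number hides which prompt is burning the money.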

Evaluation Is Subjective

Traditional ML has clean metrics. Accuracy. Precision. Recall. F1 score. AUC. You run your model on a test set, calculate the numbers, and you know how well it performs.

How do you measure whether an LLM's response is good? Is it fluent? Relevant? Coherent? Factually correct? Appropriately cautious about uncertainty? Free of bias? Aligned with your brand voice?

These qualities are difficult to measure automatically. You need human evaluation. You need rubrics. You need to define what "good" means for your specific use case. And even then, two evaluators might disagree.

This makes A/B testing harder, regression detection fuzzier, and quality assurance more expensive. You can't just run a test suite and call it done.
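If you do go the rubric route, it helps to measure how often your evaluators actually agree before trusting the averages. Here's a minimal sketch, assuming each evaluator scores every response from 1 to 5 on the same criteria. The criteria names and the "within one point" tolerance are my assumptions, not a standard.

```python
from statistics import mean

# Hypothetical rubric criteria, each scored 1-5 by a human evaluator.
RUBRIC = ("relevance", "factuality", "tone")


def average_scores(ratings: list[dict[str, int]]) -> dict[str, float]:
    """Mean score per criterion across all rated responses."""
    return {criterion: mean(r[criterion] for r in ratings) for criterion in RUBRIC}


def agreement_rate(
    evaluator_a: list[dict[str, int]],
    evaluator_b: list[dict[str, int]],
    tolerance: int = 1,
) -> float:
    """Fraction of (response, criterion) pairs where the scores differ by at most `tolerance`."""
    pairs = [
        (a[criterion], b[criterion])
        for a, b in zip(evaluator_a, evaluator_b)
        for criterion in RUBRIC
    ]
    return sum(abs(x - y) <= tolerance for x, y in pairs) / len(pairs)
```

A low agreement rate usually means the rubric is ambiguous, not that one evaluator is wrong, so it's a signal to tighten the definitions before collecting more ratings.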

Why This Matters for Production Systems

These differences aren't academic. They have real operational implications.

Your Deployment Pipeline Needs to Handle Text Artifacts

You're not just deploying model weights. You're deploying prompt templates, few-shot examples, retrieval indices, chain configurations. These artifacts interact in complex ways. A prompt change might require a retrieval index update. A model version change will often "break" existing prompts because its probabilistic outputs will be different.

Your CI/CD pipeline needs to version all of these together, test them as a system, and deploy them atomically. That's harder than it sounds.
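One way to think about "version them together" is a release manifest: a single id derived from every artifact that shapes behavior, so that changing any one of them produces a new release. This is a sketch under my own assumptions; the field names and the hashing scheme are hypothetical.

```python
import hashlib
import json


def fingerprint(artifacts: dict) -> str:
    """Stable short hash of the artifact set (keys sorted for determinism)."""
    canonical = json.dumps(artifacts, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


def build_release(
    model_id: str,
    prompt_template: str,
    few_shot_examples: list[str],
    index_version: str,
) -> dict:
    """Bundle everything that shapes behavior into one versioned unit."""
    artifacts = {
        "model_id": model_id,
        "prompt_template": prompt_template,
        "few_shot_examples": few_shot_examples,
        "index_version": index_version,
    }
    return {"release_id": fingerprint(artifacts), "artifacts": artifacts}
```

Deploying and rolling back by `release_id`, rather than by individual artifact, is what keeps a new prompt from going live against an old retrieval index.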

Your Monitoring Needs to Track Generative Behavior

Traditional ML monitoring tracks prediction distributions, feature drift, and error rates. Those metrics still matter for LLMs, but they're not enough.

You need to monitor output length, token usage, response latency, user satisfaction ratings, hallucination frequency, safety violations, and prompt effectiveness. You need to detect when users are asking questions your system can't handle. You need to identify when your retrieval is returning irrelevant context.

And because this is a distributed system, you need to trace the flow between components. Is your RAG pipeline retrieving the right chunks for a given query? Is the embedding model producing consistent vectors? Is the context injection staying within token limits? Each boundary between components needs instrumentation so you can pinpoint where things break.

This requires new instrumentation, new dashboards, and new alerting strategies.
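At its simplest, instrumenting the boundaries means wrapping each stage in a timed span that shares a trace id. The sketch below uses only the standard library; `Trace` is a hypothetical class written for this post, and in production you'd more likely reach for an established tracing framework.

```python
import time
import uuid
from contextlib import contextmanager


class Trace:
    """Collects one timed span per pipeline stage under a shared trace id."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex
        self.spans: list[dict] = []

    @contextmanager
    def span(self, name: str, **attributes):
        start = time.perf_counter()
        record = {"name": name, "attributes": attributes, "error": None}
        try:
            yield record  # the stage can attach more attributes as it runs
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            record["duration_ms"] = (time.perf_counter() - start) * 1000
            self.spans.append(record)


def handle_request(query: str) -> Trace:
    """Toy request flow with placeholder stages, to show where spans go."""
    trace = Trace()
    with trace.span("retrieve", query=query) as span:
        chunks = ["chunk one", "chunk two"]  # placeholder for real retrieval
        span["attributes"]["chunks_returned"] = len(chunks)
    with trace.span("build_prompt") as span:
        prompt = "\n".join(chunks) + "\n" + query
        span["attributes"]["prompt_words"] = len(prompt.split())
    return trace
```

With spans like these, "the answer was bad" becomes "retrieval returned zero chunks" or "the prompt was over the limit", which is a much better place to start debugging.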

Your Costs Are Variable and Unpredictable

In traditional ML, inference costs are relatively stable. You know roughly how much each prediction costs, and you can forecast expenses based on traffic.

With LLMs, costs depend on input length, output length, and model size. A user asking a complex question with a long context window costs more than a simple query. A chatbot that generates verbose responses costs more than one that's concise.

You need cost controls, usage quotas, and optimization strategies. You need to balance quality against expense. Sometimes that means using a smaller model. Sometimes it means limiting response length. Sometimes it means caching common queries.
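Two of those levers, caching and model selection, fit in a short sketch. The model names, the word-count threshold, and the `call_model` callable are all placeholders I've assumed for illustration; real routing usually looks at more than query length.

```python
from typing import Callable


def normalize(query: str) -> str:
    """Collapse case and whitespace so trivially different queries share a cache key."""
    return " ".join(query.lower().split())


def choose_model(query: str, threshold_words: int = 20) -> str:
    """Naive routing rule: short queries go to the cheaper model."""
    return "small-model" if len(query.split()) <= threshold_words else "large-model"


class CachedClient:
    """Wraps a model-calling function with an exact-match response cache."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        self._call_model = call_model
        self._cache: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def ask(self, query: str) -> str:
        key = normalize(query)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        answer = self._call_model(choose_model(query), query)
        self._cache[key] = answer
        return answer
```

Tracking `hits` and `misses` matters as much as the cache itself: the hit rate tells you whether caching is actually saving money for your traffic.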

Your Risk Surface Is Larger

Traditional ML models can make wrong predictions. LLMs can generate toxic content, leak training data, hallucinate facts, amplify biases, and produce outputs that violate regulations or brand guidelines.

You need guardrails. Content filters. Human-in-the-loop approval for high-risk domains. Audit logging. Compliance frameworks. Red teaming. These aren't optional; they're table stakes for high-value production LLM systems.
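As a rough illustration of a guardrail plus an audit trail, here's an output filter that withholds responses matching simple patterns and logs every decision. The two regexes are deliberately simplistic assumptions (an email-like string and a US-SSN-like string); real content filtering needs far more than pattern matching.

```python
import re

# Hypothetical, deliberately simple patterns; real filters need much more than regexes.
BLOCKED_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

WITHHELD_MESSAGE = "This response was withheld pending review."


def apply_guardrails(output: str, audit_log: list[dict]) -> str:
    """Check a model output against the patterns and record the decision."""
    violations = [
        name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(output)
    ]
    # Log the decision, not the raw text, so the audit trail doesn't itself leak data.
    audit_log.append({"violations": violations, "blocked": bool(violations)})
    return WITHHELD_MESSAGE if violations else output
```

The withheld responses are natural candidates for a human-in-the-loop review queue.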

What LLMOps Actually Means

LLMOps isn't just MLOps with a new acronym. It's a distinct operational discipline that addresses the unique challenges of deploying and maintaining large language models in production.

- Prompt lifecycle management: Versioning, testing, and deploying prompt templates and chains

- Retrieval pipeline operations: Managing vector databases, embedding models, and context injection

- Memory management: Handling context windows, conversation history, and long-term memory storage

- Cost and latency optimization: Tracking token usage, implementing caching, and selecting appropriate model sizes

- Generative quality monitoring: Evaluating fluency, relevance, factuality, and safety

- Feedback loop integration: Capturing user ratings and using them to improve prompts and retrieval

- Safety and governance: Implementing guardrails, audit logging, and compliance controls

These capabilities build on MLOps foundations but extend them in ways that traditional ML systems don't require. You still need model versioning, deployment pipelines, and monitoring. But you also need prompt and guardrail versioning, retrieval index management, and token budget controls. You also need to figure out how to test a system that is inherently non-deterministic and not idempotent.
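One common way to test something non-deterministic is to stop asserting on a single run and assert on a pass rate over many runs instead. Here's a sketch of the idea. `flaky_model` is a simulated stand-in that answers correctly about 90% of the time, an assumption made purely so the example runs without a real model.

```python
import random
from typing import Callable


def flaky_model(prompt: str, rng: random.Random) -> str:
    """Simulated sampled LLM call: usually right, occasionally not."""
    return "4" if rng.random() < 0.9 else "5"


def pass_rate(
    prompt: str,
    check: Callable[[str], bool],
    runs: int,
    seed: int = 0,
) -> float:
    """Run the same prompt many times and report the fraction of outputs that pass."""
    rng = random.Random(seed)
    passed = sum(check(flaky_model(prompt, rng)) for _ in range(runs))
    return passed / runs


def test_arithmetic_prompt_is_reliable_enough() -> None:
    rate = pass_rate("What is 2 + 2?", lambda answer: answer == "4", runs=200)
    # The threshold is a product decision: how often is "wrong" acceptable?
    assert rate >= 0.75
```

The seed only makes the simulation repeatable here. Against a real model, the run count and the threshold are the knobs you tune, trading test cost against confidence.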

The Organizational Shift

LLMOps also changes how teams work. You can't just hand LLM operations to your existing ML engineering team and expect it to work.

You need prompt engineers who understand how to coax desired behavior from language models. You need domain experts who can evaluate whether responses are factually correct. You need governance specialists who understand the regulatory and ethical implications of generative AI.

These roles don't exist in traditional ML teams. And the collaboration patterns are different. Prompt engineers need tight feedback loops with users. Domain experts need to be involved in evaluation. Governance can't be an afterthought; it has to be baked in up front, because guardrails added late can change the LLM's outputs, which in turn means reworking the prompts.

This means LLMOps isn't just a technical challenge; it's an organizational one. You need cross-functional teams, clear ownership, and processes that support rapid iteration while maintaining safety and quality.

Why You Should Care

If you're building or deploying LLM-powered applications, you're going to run into these challenges whether you call it LLMOps or not. The question isn't whether you need these capabilities; it's whether you build them intentionally or discover them through painful production incidents.

Teams that treat LLMs like traditional ML models end up with brittle systems, unpredictable costs, and quality problems they can't diagnose. Teams that invest in LLMOps early build systems that are reliable, cost-effective, and maintainable.

The difference isn't just technical sophistication. It's understanding that LLMs are fundamentally different from traditional ML, and that difference demands a different operational approach.

What's Next?

In Part 2 of this series, I'll walk through the technical implementation of LLMOps: the tools, the infrastructure, and the workflows that make production LLM systems work. In Part 3, I'll cover measurement and evaluation: how to know if your LLM system is actually working.